Getting started with Google Cloud Datastore

Whether or not you are a heavy user of Google's services, there are solid reasons to get to know Google Cloud Datastore:- for humble usage it's free, and tolerably priced for modest needs

- it's JSON. If you code in JavaScript or Google Apps Script, your data goes in and comes back at you with little effort in marshalling or object relational mapping. You even get a query language that returns JSON.

- ScriptDB is going away. If you used it, you need a replacement

- arguably it offers the highest availability and reliability you'll get for the price.

- after the initial hurdle of wrapping your mind around the way it works, there's not much more to learn

- it gets you going with NoSQL (it's about time, right?)

Down side:

- there's no internal Google Apps Script internal API yet, (stuck with UrlFetchApp.fetch() for now)

- it's not even a little bit forgiving - nothing works until you've got everything right.

- OAuth is obligatory

- there aren't a lot of people using it yet, so not a lot of docs or Q&A forums available yet

- the initial hurdle is a pain.

So the purpose of this post is :

"Provide a complete step by step guide for using

Google Apps Script to query Google Cloud Datastore."

Google Apps Script to query Google Cloud Datastore."

Hopefully, with this blog post, we'll remove all of the down side so you can jump right in and get straight to work.

Specifically, we're going to use Google Datastore as a source of values for a Google Apps Script web app. It doesn't have to be a web app permanently, but needs to be for the initial set up phase,

Let's say you have many users making their own spreadsheets and need a central source for logged data: temperature and pressure readings of a manufacturing process for example. It's a simple table but too many rows for a spreadsheet. Their spreadsheet could access the script through a simple local formula, as we will demonstrate.

Let's say you have many users making their own spreadsheets and need a central source for logged data: temperature and pressure readings of a manufacturing process for example. It's a simple table but too many rows for a spreadsheet. Their spreadsheet could access the script through a simple local formula, as we will demonstrate.

We're going to want :

- tick : time stamp

- chamber : string

- temp : number

- press : number

So here following is our procedure ...

For a quick overview, you can watch me do it for you first in the accompanying YouTube video.

Develop our Google Apps Script client for Google Cloud Datastore

Obtain the starter script

The next steps begin with a small example script I have published, derived from Bruce McPherson's documentation.

Open this link and make your own copies of the files it contains.

"Temperature Pressure API End Point".

It looks like this :

Do the same with the spreadsheets, "Master: Temperature and Pressure Analysis" and "Master: Debugging Log". The latter spreadsheet contains an embedded script too. Probably, you'll also want to change its name, when you get to it.

Ignore any errors for now; we'll be repairing them as we progress through this guide.

* * * Leave these page's tabs open, we will be returning to them. * * *

Include the EzyOauth2 code library

Its Project ID is :

MSaYlTXSVk7FAqpHNCcqBv6i_d-phDA33

Follow these steps to install it in the script file, "Temperature Pressure API End Point". . .

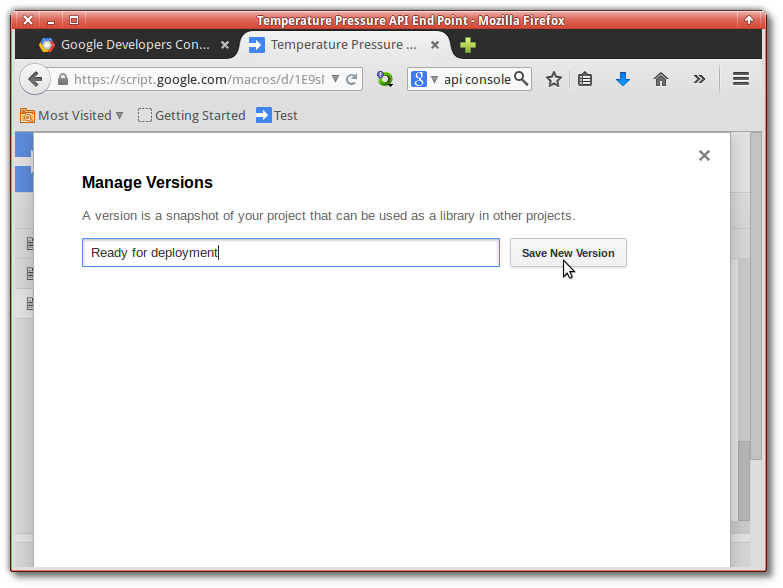

Prepare script for deployment

Web App scripts must conform to the GAS version control system before they can be exposed for use.

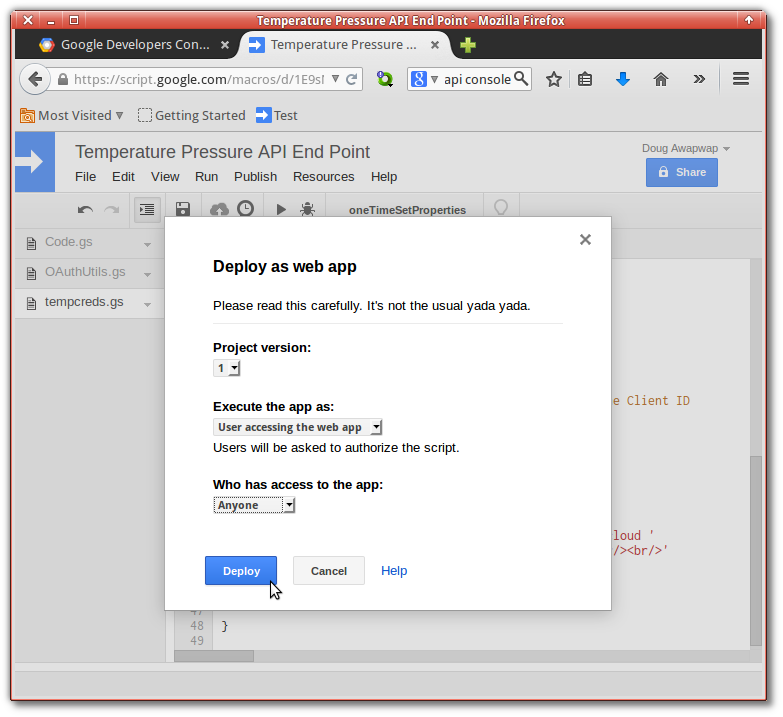

Once the version has been specified, we can deploy the application.

Choose the settings :

- User accessing the web app

- Anyone

For now, we are solely interested in the URL for "Test web app for your latest code". It is the development version and always up-to-date with the latest save. The other one is for the version controlled production web app. We'll publish that for use, when everything else is working.

* * * Leave this page's tab open, we will be returning to it. * * *

Prepare Cloud Datastore

Get to the Google API Console and log in :

Create a new project :

Activate Billing

Complete the billing page as appropriate ...

Select the APIs we intend to use ...

Prepare our project "consent screen"

Make it nice. This page should clearly communicate your details to the person who will be giving you permission to access their stuff. Email address and project name are the only obligatory bits of information, however.If you skip this step you will have problems later on ...

Prepare our first data entity

Under the Storage menu, turn to the Cloud Datastore section and open the subsection Query:

We create our first couple of entities:

We need two for the demo . . .

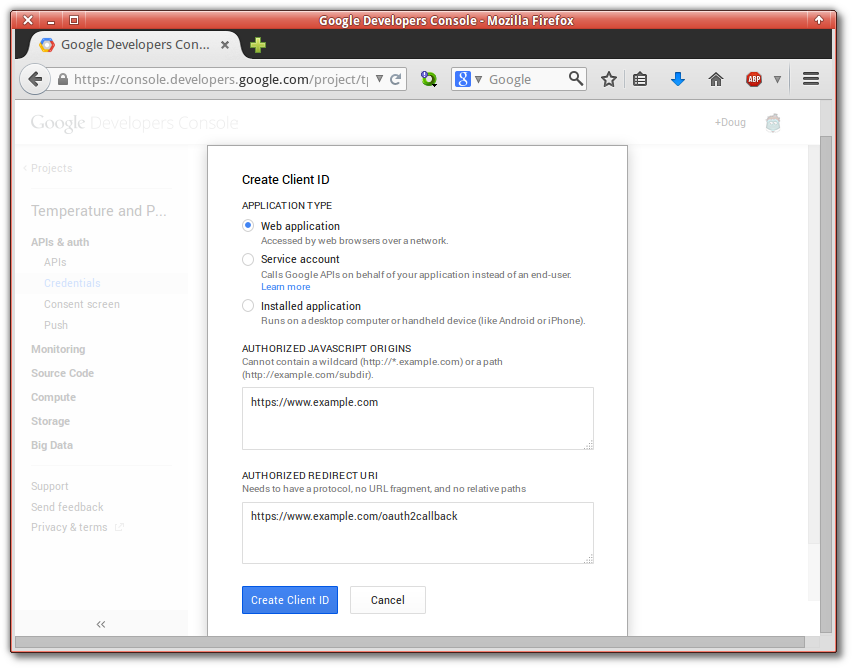

Establish the project credentials we'll use in OAuth dialogues.

We must create a "Client ID for Web Applications"

Return to the screen reached earlier that looks like this :

The URL taken above is required in the field, "Authorized redirect URI".

Get your Google Client ID from the Console

Get the Project ID from Google Console project overview :

The Project ID is used as the retrieval key for the Client Id we'll be keeping in the scripts properties :

Take the settings of the other constants from the Cloud Datastore according to the relations shown in this image :

For testing we need to know the "id" numbers of the two entities we created :

If you are following this guide exactly, except for your entity ids, which are generated automatically, your constants should look like this :

Much earlier above, we already obtained the credentials in a JSON file and used them to initialize the constant, serviceCreds.

But we don't want that kind of thing lying around in a script so, an urgent thing we need to do is push our OAuth credentials into the properties register of our script, and remove them from the text of the script. There is a function just for that, but let's look at the current condition of our properties ...

Initially you will have no properties set . . .

Executing the function oneTimeSetProperties pushes the required bits of the JSON data into the properties storage of our script ...

The script now holds a permanent private record of our OAuth credentials. Subsequent executions that use EzyOauth2 will augment the property record with an Access Token and a Refresh Token.

Our application will now reply correctly to OAuth challenges from Google.

Disable the temporary doGet and enable the definitive one.

It's usage is as indicated here ...

Another thing we want is an execution log. A spreadsheet is a good place to keep it so we will specify a place to write to and then enable debugging ...

We need to make the identifier of the target sheet explicit, too ...

Finally, we must enable debug logging ...

Now, we need to obtain the web page URL opened earlier from the menu "Publish" » "Deploy as web app" . . .

Open the query URL . . .

Execution results appear in the Debugging Logger spreadsheet :

As indicated in the introduction, a spreadsheet can also pull the data using a custom formula:

This error indicates that an attempt has been made in a spreadsheet formula, to make a custom script write to a spreadsheet. To avoid, infinite circular recalculations from spreadsheets to scripts and back to spreadsheets again, this is prohibited.

In this case, it is caused by our logging capability introduced earlier. We have to turn it off, before we can get past that error ...

In this case, it is caused by our logging capability introduced earlier. We have to turn it off, before we can get past that error ...

Let's make the formula recalculate :

Conclusion:

If you got through all of the above successfully, you should now have a solid starting point for easily moving data around between Google Cloud Datastore and Google Spreadsheets using Google Apps Script.

You will have seen that Google affords Apps Script internal access to Sheets, but not to Cloud Datastore. Instead, you have to loop out of Google and back in again, using UrlFetchApp.fetch(), with all the concomitant hardship of configuring OAuth. When will that change?

Even if it does change, you still have OAuth capability in your armoury, for connecting to Twitter, Instagram or any of the thousands of others.

You also have a quick introduction to the steps involved in using HTTP to access Google's new Cloud Datastore. With a working base from which to get started, subsequent steps ought to be straightforward.

Even if it does change, you still have OAuth capability in your armoury, for connecting to Twitter, Instagram or any of the thousands of others.

You also have a quick introduction to the steps involved in using HTTP to access Google's new Cloud Datastore. With a working base from which to get started, subsequent steps ought to be straightforward.